What would you have done if you had been caught cheating on an essay at university?

If your immediate response wasn’t “sue the bastards, of course”, you probably don’t have the necessary gumption to survive in academia in the post-truth era. This week, US student Orion Newby successfully sued Adelphi University, NY, over its disciplinary action against him, after the university’s AI detection tools repeatedly flagged his work for plagiarism. You, my perspicacious reader, will not need me to tell you this is a groundbreaking case that will have huge repercussions for universities around the world and, indeed, that endangered species – the essay.

Clarendon feels great sympathy for universities, which are having to adapt to the new world of LLMs. We might not have seen the transformative advances in medicine, energy and economic growth, but we can at least be grateful for the endless online AI slop, the decimation of the graduate job market and the technology that allows the poor undergraduates, who are witnessing this decimation from the cocoon of their debt hole, to crank out a decent 3,000-word essay with a few strikes of their keyboards. That’s progress, my friend.

Clarendon has great sympathy for Orion Newby too. The AI detection tools aren’t effective, despite their promises of up to 99% accuracy. Most AI detection tools achieve somewhere between 30% and 70% accuracy, a wide range that reflects both their inaccuracy and their inconsistency. This is before students add mistakes and idiosyncrasies to throw the detection apps off. Don’t worry – if your student is too lazy to do this, there are apps that will do it for them. Furthermore, the detection tools will always be playing catch-up with the LLMs, whose models change much more quickly than the detection apps do, which is why the same app can give different verdicts on the same piece of work a couple of months apart.

What to do?

You’ll be pleased to know, dear reader, that Clarendon has joined the AI in Education at Oxford University (AIEOU) research group, so that we can be at the cutting edge of these important questions.

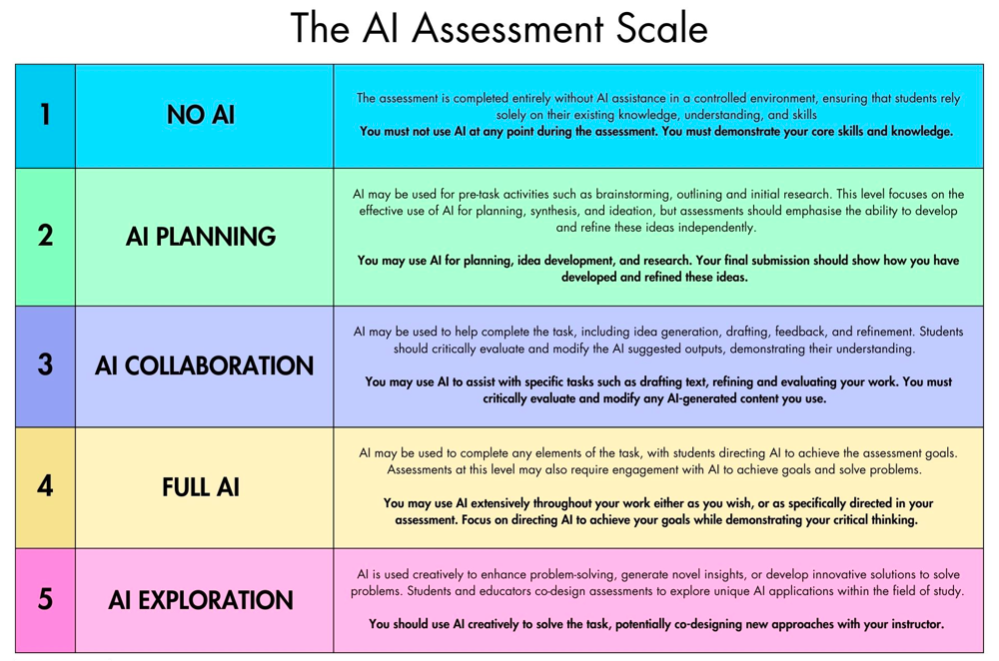

This week, we joined a webinar on the AI Assessment Scale led by Dr. Mike Perkins. The scale rests on two assumptions: AI detection tools are faulty, and students need to be using AI to ready themselves for the job market. It aims to square these assumptions with the maintenance of ethics and integrity by placing each piece of work on a scale of permitted AI integration.

Level 1 means no AI use at all

This level needs to be done in a controlled environment: an exam or a presentation / debate in class. This level is the best for maintaining academic integrity, but it’s not fair on all students, nor is it particularly representative of employed work, which tends to be more project-based.

Level 2 allows for AI in the planning and research phase

When students hand in their work, they must include their prompt history to show how they directed and finessed the AI. A problem with this can be AI’s accuracy for research, at least presently. This is a helpful level, letting students know they will be assessed on the thoughtfulness, insightfulness and nuance of their prompts, as well as on their evaluation of the research.

Level 3 allows for extensive AI use, but with a critical evaluation at the end

Students must also modify all AI-generated content. This level is useful, but requires a lot of trust in the students, as they could use AI to write the self-reflection and modify the content. Still, as Dr. Mike Perkins said, at some stage we are going to have to trust the students. We must make sure we are communicating to students, at every step of their education, the long-term pitfalls of over-reliance on AI. AI use should come with an academic health warning.

Level 4 is no holds barred AI use

Again, students will be marked on prompts. Clarendon doesn’t approve of this level so much. Quite apart from the obvious danger of students using one AI to generate and another AI to prompt, it seems a dereliction of brain power.

Level 5 is creative use of AI to demonstrate understanding

Clarendon loves this level. It’s helpful for students in building AI skills. AI is so easy to use that students don’t need to be coders. And it encourages lateral thinking.

—

The webinar from Dr. Mike Perkins left us with a feeling of ambivalence. We were heartened that professors like Dr. Perkins are thinking so clearly and thoughtfully about this important issue. Clarendon also thought that there was much to recommend the scale itself and that, if you communicated to students how the scale worked and the dangers of over-reliance on AI, it might positively affect student behaviours.

However, we were left with a distinct feeling that this isn’t a utopian scenario; this isn’t the ideal education you would wish for your child. We are having to adapt to technology that no one asked for.

It’s incredibly naive of us to expect anyone to still be reading at this point, but we’d love to hear what you think if you are.